Last Updated on January 7, 2026

Python scripts can help you automate and improve the efficiency of your data analytics processes. Even a basic Python command will accelerate your work with data and Excel spreadsheets.

In this step-by-step tutorial, I’ll walk you through how to use Python and Pandas to consolidate multiple Excel or CSV files into one table. I will show you how to modify and transform data to your desired format. Finally, you will learn how to export the transformed data into one file. By the end of this tutorial, you’ll have become more familiar with Python, and you’ll see how beneficial it can be to your future data analysis.

You can download the three sample CSV files or the entire script that I’ll use during this tutorial so that you can follow along and practice the steps for yourself:

Data File 1

Entire Script for Consolidating and Transforming Data

Once you’ve downloaded these files, save them in a local folder on your computer so that they’re easy to access when you need them. Then, you’ll be ready to follow the eleven steps below to consolidate and transform the data.

Note: If you’re more of a visual learner, scroll down to the bottom of this article and you’ll find a video version of this tutorial.

Step 1: Install Python/Anaconda

If you don’t yet have Python on your computer, the first step is to install it. There are many different versions of Python and ways to install it, but one of the best options is Anaconda, a Python distribution that is widely used by data scientists and analysts. It is free to download and comes with Jupyter and Pandas already included.

Once you’ve installed Anaconda, create a new Jupyter notebook. We’ll use this to write the Python scripts that streamline the data consolidation process. Don’t forget to give your Jupyter notebook file a name; Jupyter will then save it automatically in your folder.

Create a new Jupyter notebook with Python.

Step 2: Create Your First Python Script

Python programming might sound a little daunting, but lots of Python commands are pretty straightforward. So, before we jump into using scripts for data analysis, let’s start with your first script: a call to Python’s built-in print() function.

As the name suggests, this function prints the specified message on the screen. For example, type in print('Hello World'), then hit Shift+Enter. You will see the sentence just below the first window. Congratulations, you have written your first script. You can now officially call yourself a Python programmer!

A Python print script displaying “Hello World” in the first window.

After the print command, you can add #text. This is a comment: Python ignores everything after the # sign, so it won’t affect your code. Comments are especially useful if you want to keep track of what your Python code does. For example, in the screenshot above, I’ve added #your first python command to the right of the command. You can also add comments above or below your scripts.
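As a minimal sketch of the two ideas in this step, the lines below print a message and carry comments both above and to the right of the command. The comment wording is only illustrative.

```python
# A comment on its own line: Python ignores everything after the '#'.
print('Hello World')  # your first python command
```

Running the cell displays only the message; neither comment appears in the output.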

Step 3: Import a Pandas Module

Now that you’ve tried out your first Python script, it’s time to start the data consolidation process. But first, make sure you’ve downloaded and saved the three CSV files at the top of the page.

To load the CSV files into your notebook, you need to import Pandas, an add-on library that extends Python’s built-in functionality. To do this, type “import pandas as pd”.

Python command to import Pandas module

You could write “import pandas”, but adding the “as pd” creates a shortcut reference. The shortcut will come in handy in the next steps. Once you’ve written this command, hit shift+enter to execute it.

Again, in the screenshot above, you’ll see I’ve added a comment with a reminder note about the “pd” shortcut. This doesn’t impact the command at all; it’s just for your benefit. It’s a good idea to get into the habit of using comments as often as possible. They will help you and any other users navigate the script and understand why certain commands have been used.

Step 4: Load File Content

Once you’ve executed the “import pandas” command, you need to load your data files and assign them to a variable. Think of a variable as a labelled memory location to store values. The next script you need to enter will load the first CSV file (data_file_1) into your notebook.

The command starts with pd.read_csv (remember that “pd” is a shortcut reference to the Pandas module), followed by ('/directory/file_name.csv', sep=';'). Rather than copying this command exactly, replace “directory” with the path of the folder where you store your files. This might be “C:\Documents\Files\” for example. On Windows, either use forward slashes in the path or put the letter r directly before the opening quote, so that Python does not read the backslashes as escape characters. You’ll also need to replace “file_name” with the name of your CSV file. In our case, this is “data_file_1.csv”. Your full command, with the correct directory and file name, should look something like this:

pd.read_csv('/home/jacekpolewski/tutorial_files/data_file_1.csv', sep=';')

The sep=';' section of the command tells Pandas that columns in the data are separated (or delimited) with a semicolon.

Execute this command (shift+enter), and the file data will be displayed below the command.
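If you want to try the command before pointing it at your own folder, here is a small sketch that reads semicolon-delimited text from memory instead of from disk. The two sample rows are invented for illustration and are not taken from the tutorial files; only the column names 'Full Name' and 'Birth Date' come from this tutorial.

```python
import io
import pandas as pd  # "pd" is the shortcut reference to pandas

# Hypothetical stand-in for a semicolon-delimited CSV file on disk.
sample_csv = io.StringIO(
    "Full Name;Birth Date\n"
    "Smith,Anna;12-Mar-1985\n"
    "Jones,Peter;03-Nov-1990\n"
)

# sep=';' tells pandas that columns are separated with semicolons,
# so the comma inside 'Full Name' stays part of the value.
print(pd.read_csv(sample_csv, sep=';'))
```

With a real file, you would pass the file path as the first argument instead of sample_csv.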

Step 5: Assign Data Content to a Variable

With your data loaded into the notebook, you can assign it to a variable. Write a name for this variable at the start of the command you’ve just written, followed by an equals sign and then the rest of the command. For example, I have used “data_1 =”, but what you call this variable is up to you. Hit Shift+Enter again and the table should disappear, because the data is now stored in the variable rather than displayed.

Python command to load a file content into the notebook, assign a dataset to a variable.

Step 6: Inspect Data Using the Head() Function

After loading your data and assigning it to a variable, it’s a good idea to inspect the data to see if all of the content has loaded correctly. You can do this by looking at the first few rows of the data using the Pandas head() function.

All you need to do is type data_1.head() into your next command, and the first five rows of the data will open beneath the command.

You’ll notice the snippet of the data content contains an extra column with index numbers, which was not part of the original CSV file. Pandas automatically adds an index that contains a unique reference number for each row (in the same way that Microsoft Excel assigns a unique number to a row and a unique letter to a column).

Inspect the first few rows of the file content with the Pandas .head() function.

Note: The technical term for the dataset in Pandas is a “DataFrame”. A DataFrame is structured like a table or a spreadsheet with indexes, rows, columns and header names. For simplicity, this tutorial will, for the most part, refer to DataFrames as datasets or tables.
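Steps 5 and 6 can be sketched together as follows. The seven rows are generated placeholders, not the contents of the tutorial file, and the variable name first_rows is only there for illustration.

```python
import io
import pandas as pd

# Hypothetical seven-row file content standing in for the real CSV file.
lines = ["Full Name;Birth Date"]
for i in range(1, 8):
    lines.append(f"Last{i},First{i};0{i}-Jan-198{i}")

# Assigning the result to data_1 stores the table instead of displaying it.
data_1 = pd.read_csv(io.StringIO("\n".join(lines)), sep=';')

first_rows = data_1.head()  # five rows by default
print(first_rows)

# The index is a row label added by pandas, not a column from the file.
print(list(data_1.columns))
print(list(first_rows.index))
```

The last two lines show that the table still has only the two columns from the file, while the index runs from 0 to 4 for the five rows returned.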

Step 7: Consolidate the Three Datasets

Repeat steps 4-6 until you’ve loaded the three tutorial CSV files and assigned each dataset to a variable (you only need to import Pandas once per notebook). When all datasets are loaded and assigned, you can start the data consolidation process (see screenshot below).

Read the remaining CSV files and assign them to Python variables.

We want to consolidate the three datasets into one data frame called data_comb, so type in data_comb = pd.concat([data_1, data_2, data_3], ignore_index = True) and hit Shift+Enter.

The pd.concat part of the command instructs Pandas to combine the datasets. All the datasets you want to combine should be listed within the square brackets. The ignore_index=True argument resets the index numbers: when the datasets have been combined, a new index is created for the complete dataset. You will notice that index values count up from 0 with no duplicates.

Because the command starts with data_comb =, the consolidated dataset is assigned to a variable. Just as when you assigned the previous datasets to variables, no table is displayed, so you should inspect the data to check that it has combined correctly.

Use the head() function to inspect the first five rows and the tail() function to check the last five rows. Five rows is the default. If you’d rather view more rows, you can insert a value such as 10 in the parentheses, and you’ll see the number of rows change.

Python script for consolidating data and inspecting file content.
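To see what ignore_index=True changes, the sketch below combines three tiny tables twice, once without the argument and once with it. The names in the tables are invented placeholders, and the variable kept is only used for the comparison.

```python
import pandas as pd

# Three small hypothetical tables standing in for data_1, data_2 and data_3.
data_1 = pd.DataFrame({'Full Name': ['Smith,Anna', 'Jones,Peter']})
data_2 = pd.DataFrame({'Full Name': ['Brown,Maria', 'Clark,Tom']})
data_3 = pd.DataFrame({'Full Name': ['Evans,Lucy', 'Hill,Sam']})

# Without ignore_index, each table keeps its own 0, 1 row labels.
kept = pd.concat([data_1, data_2, data_3])
print(list(kept.index))       # [0, 1, 0, 1, 0, 1]

# With ignore_index=True, the combined table gets a fresh index.
data_comb = pd.concat([data_1, data_2, data_3], ignore_index=True)
print(list(data_comb.index))  # [0, 1, 2, 3, 4, 5]

print(data_comb.tail(2))      # last two rows instead of the default five
```

Duplicate index values are not an error in themselves, but a fresh index makes it easier to refer to a single row later.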

Step 8: Further Checks and Validations

It’s a good idea to carry out a few further checks and validations to ensure the data is combined and loaded correctly, as errors can compromise the integrity of your data analysis.

One way to assess the accuracy of the data_comb dataset is to check the number of rows in each dataset. Add the numbers and compare them to the total number of rows of the combined dataset.

To do this, first type in and execute data_1.shape. You will notice two values enclosed in parentheses. The first value (100) corresponds to the number of rows, and the second (2) to the number of columns in the dataset. Add [0] to the end of the command to display just the number of rows so that it reads data_1.shape[0]. Then, to compute the sum of rows of each dataset, use the command data_1.shape[0] + data_2.shape[0] + data_3.shape[0]. In this case, the output value should equal 300.

To compare this with the total number of rows in the combined dataset and make sure the datasets match up, type and execute the command data_comb.shape[0]. The output value should also equal 300. If it doesn’t, that’s the first sign that the data has not loaded or consolidated correctly.

Using Python commands to check that data is combined and loaded correctly.
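The row-count check can also be written so that the notebook compares the numbers for you. This is a sketch on three-row placeholder tables rather than the 100-row tutorial files, and expected_rows is a name introduced here for illustration.

```python
import pandas as pd

# Hypothetical three-row tables standing in for the tutorial datasets.
data_1 = pd.DataFrame({'Full Name': ['a', 'b', 'c'], 'Birth Date': ['x', 'y', 'z']})
data_2 = data_1.copy()
data_3 = data_1.copy()
data_comb = pd.concat([data_1, data_2, data_3], ignore_index=True)

print(data_1.shape)  # (rows, columns)

# Sum the row counts of the source tables and compare with the combined table.
expected_rows = data_1.shape[0] + data_2.shape[0] + data_3.shape[0]
print(expected_rows, data_comb.shape[0])

# Stop with an error message if any rows went missing or were duplicated.
assert data_comb.shape[0] == expected_rows, "row counts do not match"
```

The assert line does nothing when the counts agree and raises an error when they differ, which is handy if you rerun the notebook on new files.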

Step 9: Data Transformation/Preparation

If you need to modify your data or transform it into your desired format before extracting valuable insights, Python and Pandas make it as fast and easy as possible to do so.

If you need to create a new column, type in and execute data_comb['Test'] = 'hello'. When you inspect the data, you will notice a new column called 'Test' filled with the value 'hello'.

Adding a test column to the consolidated dataset, filled with the values “hello”
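Here is a short sketch of the same command on a two-row placeholder table, so you can see that the single value is repeated on every row. The names in 'Full Name' are invented.

```python
import pandas as pd

# Hypothetical two-row table standing in for data_comb.
data_comb = pd.DataFrame({'Full Name': ['Smith,Anna', 'Jones,Peter']})

# Assigning one value to a new column name fills every row with that value.
data_comb['Test'] = 'hello'
print(data_comb)
```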

You can also create new columns based on an already existing column. The data_comb dataset contains a 'Full Name' column which consists of both 'Last Name' and 'First Name' values separated by a comma. You can separate these values into two columns by using a split function: data_comb[['Last Name', 'First Name']] = data_comb['Full Name'].str.split(",", expand=True).

On the left side of the above command, the two names of the columns to be created are enclosed in double square brackets. The outer brackets select columns from the dataset, and the inner brackets hold the list of names, which is needed whenever you refer to more than one column. The .str on the right side of the command indicates that we will be operating on a string or a text column. The "," in the command is the delimiter that separates the two name values, and expand=True returns the split values as separate columns rather than as a single column of lists.

Once you’ve executed the command, inspect the new table again. You should see the ‘First Name’ and ‘Last Name’ columns on the right side of the table.

Splitting text to columns in Python using the split function.
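The split can be tried on a small placeholder table first. The names below are invented and assume the "Last,First" layout described above, with no space after the comma.

```python
import pandas as pd

# Hypothetical two-row table standing in for data_comb.
data_comb = pd.DataFrame({'Full Name': ['Smith,Anna', 'Jones,Peter']})

# expand=True returns one column per piece, which is then assigned
# to the two new column names in the same left-to-right order.
data_comb[['Last Name', 'First Name']] = (
    data_comb['Full Name'].str.split(',', expand=True)
)
print(data_comb)
```

The order of the names on the left matters: the first piece of each split goes to the first name in the list.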

If at any point you need to delete columns (for example, you might want to delete the test column we just created), you can use the command: data_comb.drop(columns=['Test'], inplace=True).
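The inplace=True part of the command decides whether the existing table is changed or a new one is returned. The sketch below shows both behaviours on a placeholder table; the variable trimmed is introduced here only for illustration.

```python
import pandas as pd

# Hypothetical two-row table that already contains the 'Test' column.
data_comb = pd.DataFrame({
    'Full Name': ['Smith,Anna', 'Jones,Peter'],
    'Test': ['hello', 'hello'],
})

# Without inplace=True, drop() returns a new table and leaves data_comb unchanged.
trimmed = data_comb.drop(columns=['Test'])
print(list(trimmed.columns))
print(list(data_comb.columns))

# With inplace=True, data_comb itself is modified and nothing is returned.
data_comb.drop(columns=['Test'], inplace=True)
print(list(data_comb.columns))
```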

Step 10: Using a V-Lookup Equivalent in Python

Microsoft Excel’s VLOOKUP (v-lookup) function is a must-use for any data analyst or scientist. You can use the Pandas .map() function to imitate a v-lookup.

First, use the command data_comb[['day_no', 'month_name', 'year_no']] = data_comb['Birth Date'].str.split('-', expand=True) to split the 'Birth Date' column into three separate columns. Inspect the data_comb DataFrame with .head(), and you will notice that the values in the 'month_name' column are in text format.

Splitting columns and inspecting the DataFrame with .head()
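As a sketch of this split, the example below uses two invented dates in a day-month-year layout, which is an assumption based on the three column names above.

```python
import pandas as pd

# Hypothetical dates in a day-month-year layout separated by '-'.
data_comb = pd.DataFrame({'Birth Date': ['12-Mar-1985', '03-Nov-1990']})

data_comb[['day_no', 'month_name', 'year_no']] = (
    data_comb['Birth Date'].str.split('-', expand=True)
)
print(data_comb.head())

# All three new columns hold text, including the day and year values.
print(type(data_comb.loc[0, 'day_no']))
```

Because the pieces come from a text column, the day and year are text as well until you convert them.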

For consistency, we want to create an additional column with the month_name value converted to its numerical equivalent. To do this in Microsoft Excel, you would create a separate table or a sheet. The first column would be filled with month names and the second with their numerical equivalents. You would then use a v-lookup formula between the lookup value and the table. In Python we can use the .map() function. First, we need to create a dictionary which, in this case, is equivalent to the table array part of the v-lookup:

month_lookup = {
    'Jan': 1,
    'Feb': 2,
    'Mar': 3,
    'Apr': 4,
    'May': 5,
    'Jun': 6,
    'Jul': 7,
    'Aug': 8,
    'Sep': 9,
    'Oct': 10,
    'Nov': 11,
    'Dec': 12
}

Notice that the pairs are enclosed in curly brackets {}, each lookup value (or key) is followed by “:” and the value assigned to it, and the pairs are separated with commas.

To create the new 'month_no' column, use the command data_comb['month_no'] = data_comb['month_name'].map(month_lookup). And, like we’ve done after most other steps, inspect the dataset to ensure the output works as expected.

Using the Python .map() function as an alternative to Excel v-lookup function.
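One detail worth checking when you inspect the result is what happens to values that are missing from the dictionary. In the sketch below, the third month name ('Sept') is a deliberately invented mismatch, and the shortened dictionary is only there to keep the example small.

```python
import pandas as pd

# Shortened lookup dictionary for illustration.
month_lookup = {'Mar': 3, 'Sep': 9, 'Nov': 11}

# 'Sept' is a hypothetical value that has no matching key.
data_comb = pd.DataFrame({'month_name': ['Mar', 'Nov', 'Sept']})

data_comb['month_no'] = data_comb['month_name'].map(month_lookup)
print(data_comb)

# Keys that are not found produce a missing value, much like #N/A in Excel.
print(data_comb['month_no'].isna().sum())
```

Counting the missing values after a .map() call is a quick way to spot spelling differences in the lookup column.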

Step 11: Export File to CSV, Excel and/or Clipboard

You’ve learned to consolidate and transform your Excel datasets in Python, so it’s now time to export your file.

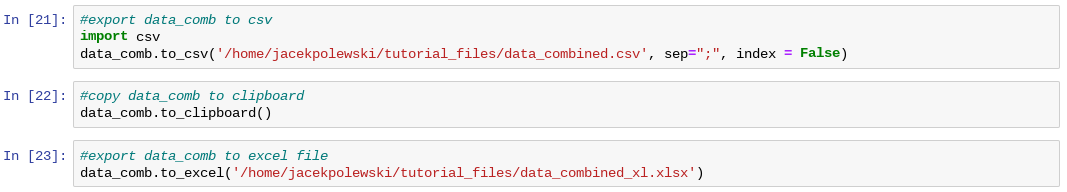

To export the data_comb DataFrame to a CSV file, type in and execute the command data_comb.to_csv('path/filename.csv', sep=';', index=False). The to_csv() function is part of Pandas, so you don’t need to import an additional module such as csv first. Replace the path/filename with your own file address. The sep=';' separates columns in the exported file with “;” and the index=False ensures that the index column is not part of the output (use index=True to keep the index column).

You can also copy the table to the clipboard by executing the command data_comb.to_clipboard(). This allows you to open an empty notepad, Excel document or Google Sheet and simply paste the data in. Finally, to export data_comb to Microsoft Excel, use the command data_comb.to_excel(filename), where filename is the path of the Excel file you want to create. There is no need to define a sep argument in this case.

Exporting a DataFrame from Python to CSV, Microsoft Excel and clipboard.
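A simple way to confirm an export is to read the file straight back in. The sketch below writes to a temporary folder so that it does not touch your own files; the table contents and the file name data_comb.csv are placeholders. The Excel and clipboard commands are left as comments because they depend on your setup (an Excel writer package and a desktop clipboard, respectively).

```python
import os
import tempfile
import pandas as pd

# Hypothetical two-row table standing in for data_comb.
data_comb = pd.DataFrame({
    'Full Name': ['Smith,Anna', 'Jones,Peter'],
    'month_no': [3, 11],
})

# Write to a temporary folder so the sketch does not touch your real files.
out_dir = tempfile.mkdtemp()
csv_path = os.path.join(out_dir, 'data_comb.csv')
data_comb.to_csv(csv_path, sep=';', index=False)

# Read the file back with the same separator to confirm the export.
check = pd.read_csv(csv_path, sep=';')
print(check)

# data_comb.to_excel(os.path.join(out_dir, 'data_comb.xlsx'))  # needs an Excel writer package
# data_comb.to_clipboard()                                     # needs a desktop clipboard
```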

Summary: From Data Consolidation to File Export

This tutorial has shown you how to import and combine individual data files using Python. To load a CSV file, use the .read_csv() function, part of the Pandas module. Assign the file to a dataframe and repeat the command for your other files. Then, combine the data using pd.concat(). Don’t forget to inspect the new dataframe using the .head() or .tail() functions.

To add a new column in Python, type in the name of your dataframe (e.g. data_comb) and follow it by a column name enclosed in square brackets (e.g. data_comb['new_column']). Then, follow it with an “=” sign and your new value. For example, to add a new column to your dataframe and fill it in with some text (e.g. 'test'), type in dataframe_name['column_name'] = 'test'.

To create new columns from the existing data, you can use the .split() function. For example, to extract last and first names separated by a comma from a 'Full Name' column, we typed in data_comb[['Last Name', 'First Name']] = data_comb['Full Name'].str.split(",", expand=True). To map your existing data to a new set of values, create a dictionary, assign it to a variable and then use the .map() function. For example, to translate month names to their numerical equivalents in our data, we executed the following script: data_comb['month_no'] = data_comb['month_name'].map(month_lookup).

Python allows you to export your data into a file easily. To create a new CSV file, use the .to_csv() function, which is part of Pandas and needs no additional import. For example, to export your dataframe, type in dataframe_name.to_csv("file_path", sep=",", index=False). To export data into an Excel file, use the .to_excel() function, and to copy it to the clipboard, use .to_clipboard().

Watch: Video Tutorial

You can watch the step-by-step tutorial on how to load, consolidate and export the data in Python in the video below.

Get in Touch

Hi, my name is Jacek and I love spreadsheets! I hope you’ve enjoyed reading this tutorial as much as I did writing it. If you have any questions about Python and data analysis, don’t hesitate to get in touch.

Explore my other tutorials to learn more about data and financial analysis. If you need further support, find out about my One-to-One Training and Data Analytics Services.

Learn More

Analyze and Forecast Customer Churn and Revenue – Build a churn/retention model in Microsoft Power BI using DAX, cohort analysis, and interactive forecasts.

Power BI Consolidated P&L & Forecast Tutorial – Learn how to merge data from multiple entities, apply FX conversions, and create a complete forecasting model in Microsoft Power BI.

Learn How to Become a Self-Taught Data Analyst – Here, I share a few tips and resources I found useful while learning to become a data analyst.

How to Analyse Data in Microsoft Excel with Power Query and a Pivot Table – This step-by-step tutorial will take you through an example of using Pivot Tables and Power Query to transform and analyse data.

How to Use Cohort Analysis to Calculate Retention and Churn Rate – A tutorial and an example of using Pivot Tables to analyse customer data and better understand users’ behaviour.